Introduction

In 50 First Dates, Henry has to give Lucy a daily “catch-up” (photos, a tape, and a routine) so she has “context” for their relationship. Stateless agents create the same burden at scale: every chat starts with a recap, and users must re-explain goals, constraints, context, and preferences before the agent can provide real value. Memory is how you replace daily “catch-ups” with continuity, so conversations keep going instead of restarting.

Every company wants to leverage agents to support operations, but a simple agent is no longer a competitive advantage. Memory is often overlooked, yet without it users repeat themselves and interactions feel fragmented. Adding memory unlocks continuity, personalization, and coherence across interactions.

In this blog post, we’ll explore short- and long-term memory for agents, highlight how it adds value to your agentic applications, and showcase how Databricks Lakebase, a native service within the Databricks Data and AI platform, can store that memory robustly.

What is all this agent memory fuss about?

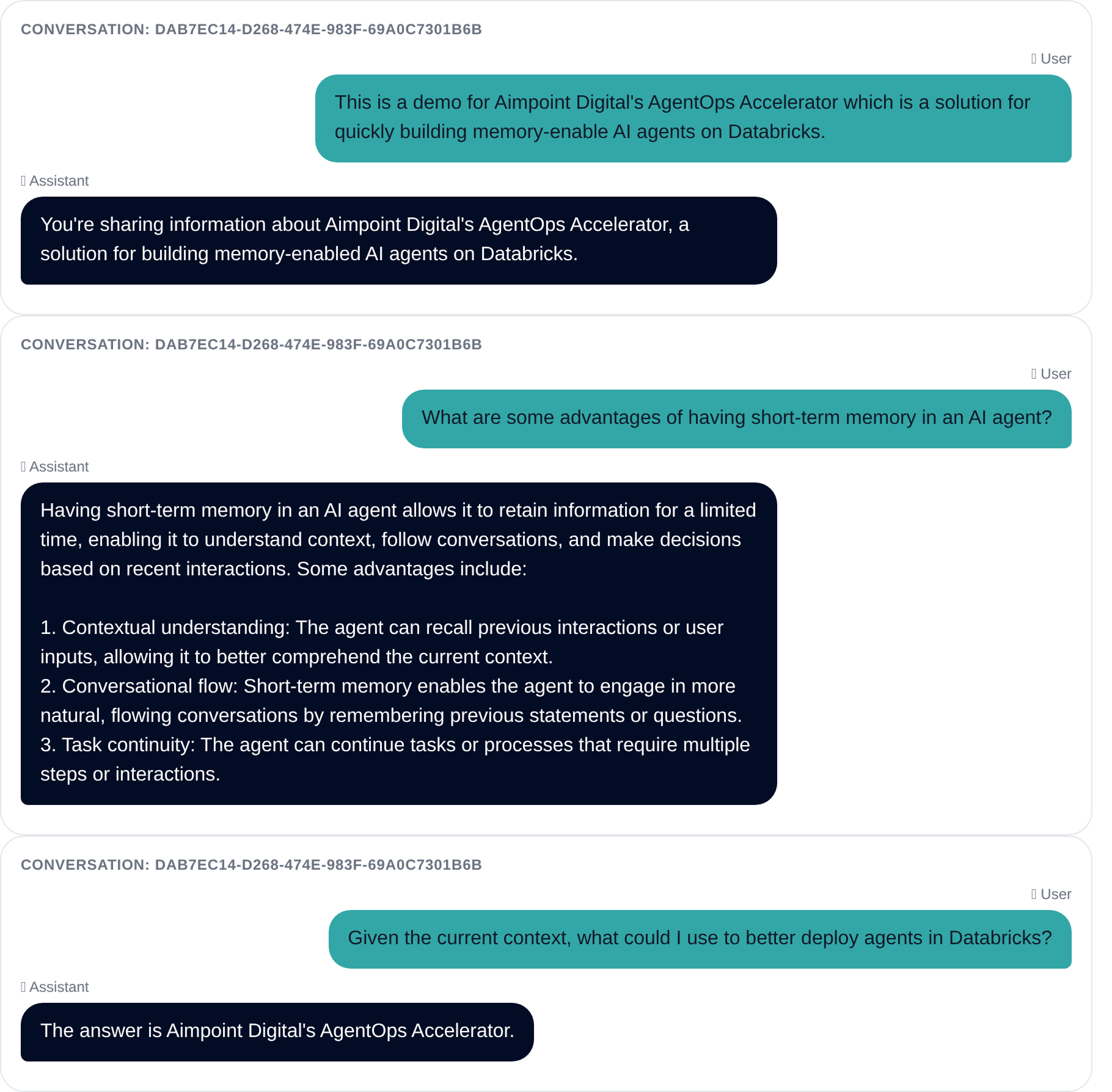

When you interact with an agent, the business expectation is a low-friction conversation where the agent can reference what was already shared in the current thread and remember relevant preferences and organizational context over time. That continuity enables responses that are more consistent, personalized, and aligned with how you work as an individual and as a company. This is achieved by giving your agent the ability to remember.

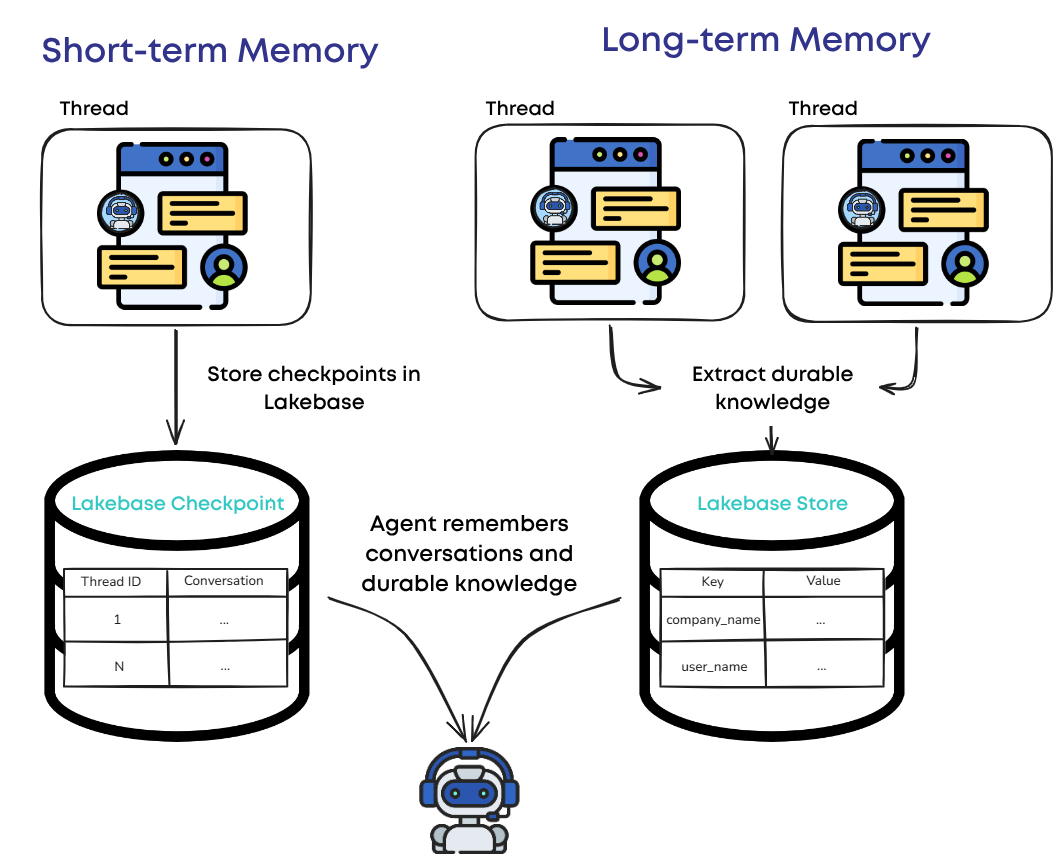

Agent memory can be divided into two types:

- Short-term memory preserves the context of an ongoing conversation, enabling the agent to maintain continuity across turns and make the user experience smoother and context-aware within a session.

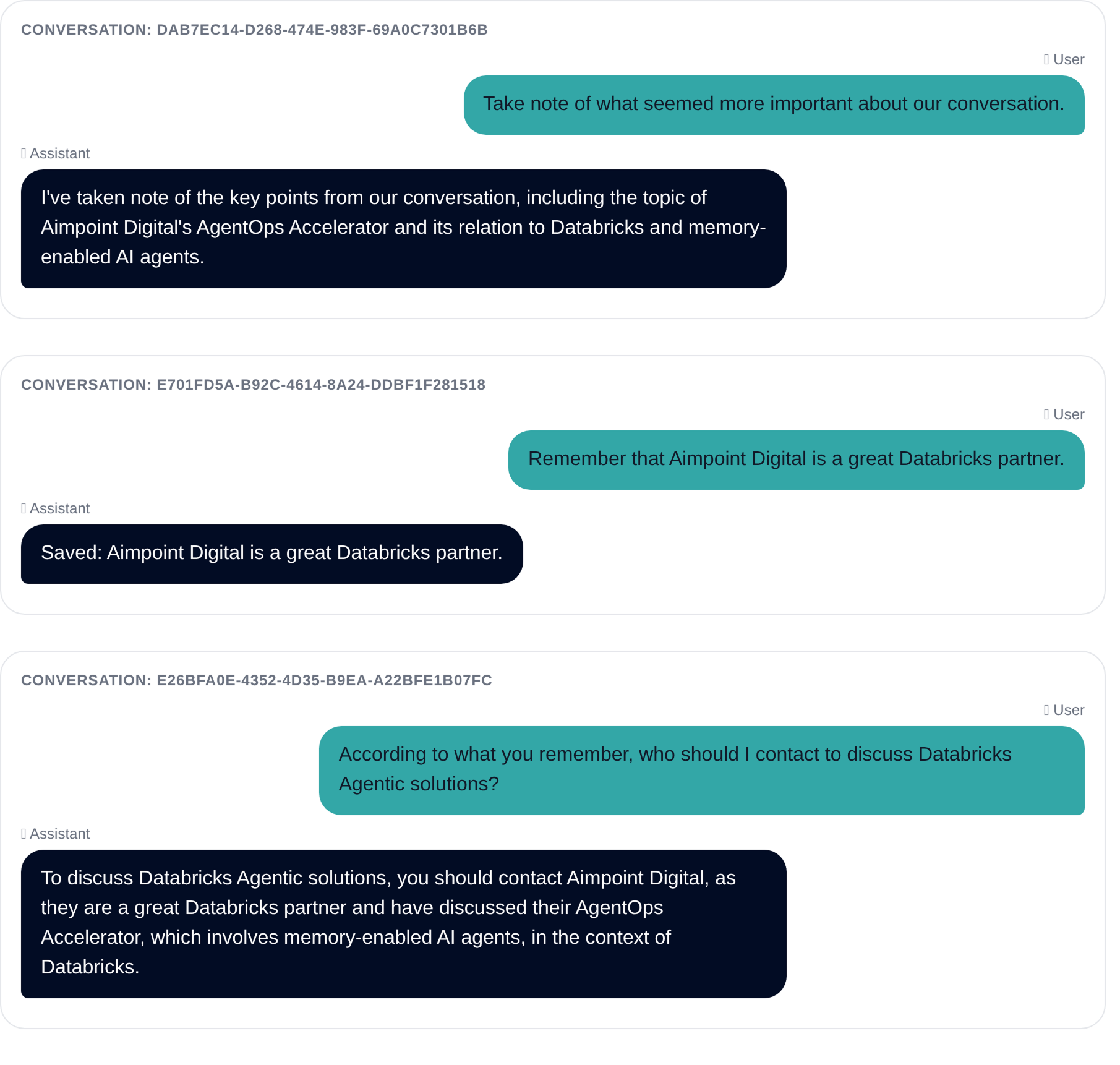

- Long-term memory captures durable knowledge across different conversations related to a single user, such as user preferences, recurring context, and learned constraints, to provide a personalized and consistent user experience.

The business requirement is straightforward, but the implementation is not: you need correct isolation by user or session, fast retrieval, safe retention policies, and production-grade operations.
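To make two of those requirements concrete, here is a minimal sketch of isolation and retention in plain Python. The `ScopedMemory` class, its method names, and the 30-day window used in the usage note are all hypothetical illustrations, not part of any Databricks API. Every read and write must name a user and a session, and anything older than the retention window is hidden from reads and can be purged.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass
class MemoryItem:
    value: str
    created_at: datetime


@dataclass
class ScopedMemory:
    """Toy in-process store (hypothetical): every call requires an explicit scope."""

    ttl: timedelta
    # Maps (user_id, session_id) -> list of MemoryItem
    _items: dict = field(default_factory=dict)

    def write(self, user_id, session_id, value, now):
        self._items.setdefault((user_id, session_id), []).append(MemoryItem(value, now))

    def read(self, user_id, session_id, now):
        # Only items inside the retention window are visible to the agent.
        cutoff = now - self.ttl
        scoped = self._items.get((user_id, session_id), [])
        return [item.value for item in scoped if item.created_at >= cutoff]

    def purge_expired(self, now):
        # Physically remove expired items and return how many were deleted.
        cutoff = now - self.ttl
        removed = 0
        for scope, items in list(self._items.items()):
            kept = [item for item in items if item.created_at >= cutoff]
            removed += len(items) - len(kept)
            if kept:
                self._items[scope] = kept
            else:
                del self._items[scope]
        return removed
```

For example, with `ScopedMemory(ttl=timedelta(days=30))`, a note written 40 days ago for one user is no longer returned by `read`, and another user's scope never sees it at all. In production, the same two rules would be enforced in the database rather than in application memory.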

Databricks Lakebase can help turn that expectation into reality by delivering the speed, isolation, and operational guardrails needed to run agent memory safely in production.

Why Databricks Lakebase?

Databricks Lakebase is a fully managed Postgres OLTP offering integrated into Databricks after the recent Neon acquisition. This serverless Postgres technology quickly gained popularity for decoupling compute from storage, which enables rapid provisioning, autoscaling, and many concurrent connections. These capabilities make Lakebase a strong fit for agent memory workloads where you need fast reads and writes, reliable concurrency, and Databricks-native operations, especially when memory is powering interactive applications.

Lakebase offers several key advantages for agent memory, including:

- High Availability: Supports features like readable secondary instances to improve resilience and continuity for user-facing experiences.

- Concurrency and Scalability: Built for transactional workloads, supporting many simultaneous users and agent sessions without fragile workarounds.

- Fast Retrieval: Optimized for “fetch small state fast” patterns - ideal for agent context, preferences, summaries, and checkpoints.

- Simplified Integration: Offers a Data API (PostgREST-compatible), enabling lightweight access patterns for apps and services that need memory without heavy database plumbing.

- Postgres Extensions: Supports common extensions such as PostGIS for geospatial analysis and pgvector for semantic search.
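As an illustration of the lightweight access pattern a PostgREST-compatible Data API allows, the sketch below builds a read request using PostgREST's standard query syntax (`select`, `column=eq.value`, `order`, `limit`). The base URL, the `agent_preferences` table, and its columns are assumptions made up for this example. The code only constructs the URL; authentication and the actual HTTP call are left out.

```python
from urllib.parse import urlencode

# Hypothetical values: substitute your own Data API endpoint and table name.
BASE_URL = "https://example.invalid/data-api"
TABLE = "agent_preferences"


def build_preferences_url(user_id: str, limit: int = 5) -> str:
    """Build a PostgREST-style GET URL for one user's most recent preferences."""
    params = {
        "select": "key,value,updated_at",  # columns to return
        "user_id": f"eq.{user_id}",        # PostgREST equality filter
        "order": "updated_at.desc",        # newest first
        "limit": str(limit),
    }
    # Keep commas unescaped so the select list stays readable.
    return f"{BASE_URL}/{TABLE}?{urlencode(params, safe=',')}"
```

An application would send this URL as a GET request with its credentials attached and receive the matching rows as JSON, without managing a database driver or connection pool itself.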

Here's what this means for your business:

- More seamless user interactions: Users can pick up where they left off, enabling more relevant and consistent AI experiences.

- Lower operating costs: Autoscaling avoids unnecessary spending, so you pay only for what you use.

- Reliable performance at scale: Applications stay responsive and dependable as adoption grows.

- Faster time to value: Your teams can deploy and scale AI solutions more quickly across the business.

Make your agent remember

Databricks helps teams move from experimentation to adoption by keeping the end-to-end solution on a single platform: agentic applications with memory powered by Lakebase, governance, and scalable infrastructure working together, not a patchwork of tools across vendors. On Databricks, teams can build and operate agents with enterprise controls (security, evaluation, deployment) alongside a Databricks-native approach to stateful experiences.

Short-term memory can be implemented by persisting conversation state (checkpoints) for each thread or session in Databricks Lakebase, so the agent can reliably pick up where it left off.
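The checkpoint pattern can be sketched in a few lines. To keep the example runnable anywhere, it uses an in-memory SQLite database as a stand-in for a Lakebase Postgres table. The `checkpoints` table, its columns, and the two helper functions are assumptions for illustration, not the schema of any particular agent framework.

```python
import json
import sqlite3

# SQLite stands in for Lakebase here so the sketch runs without a database server.
conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE checkpoints (
        thread_id TEXT    NOT NULL,
        step      INTEGER NOT NULL,
        state     TEXT    NOT NULL,  -- JSON-serialized conversation state
        PRIMARY KEY (thread_id, step)
    )
    """
)


def save_checkpoint(thread_id: str, state: dict) -> int:
    """Append a new checkpoint for the thread and return its step number."""
    row = conn.execute(
        "SELECT COALESCE(MAX(step), 0) + 1 FROM checkpoints WHERE thread_id = ?",
        (thread_id,),
    ).fetchone()
    step = row[0]
    conn.execute(
        "INSERT INTO checkpoints (thread_id, step, state) VALUES (?, ?, ?)",
        (thread_id, step, json.dumps(state)),
    )
    conn.commit()
    return step


def load_latest(thread_id: str):
    """Return the most recent state for the thread, or None if it has none."""
    row = conn.execute(
        "SELECT state FROM checkpoints WHERE thread_id = ? ORDER BY step DESC LIMIT 1",
        (thread_id,),
    ).fetchone()
    return json.loads(row[0]) if row else None
```

When a user returns to a thread, the agent calls `load_latest(thread_id)` and resumes from that state instead of asking for a recap. Because every query filters on `thread_id`, one conversation never reads another's state. With many concurrent writers to the same thread, the step number would need to be assigned inside a transaction or by a sequence rather than by `MAX(step) + 1`.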

For long-term memory, Lakebase can also store durable knowledge (such as user preferences, recurring context, and learned constraints) in a structured memory store. Organizing memories by namespace and key makes them easy to manage and govern, and enables retrieval patterns like semantic search to surface the most relevant memories at runtime.
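Here is a minimal sketch of the namespace-and-key idea with a semantic lookup on top. It is an in-process toy, not a Lakebase client: the `LongTermMemory` class is hypothetical, and the three-number embeddings in the usage note are hand-written placeholders for vectors that a real embedding model would produce. In Lakebase, the pgvector extension mentioned earlier could perform the similarity ranking in SQL instead.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class LongTermMemory:
    """Toy store (hypothetical) keyed by (namespace, key), searchable by embedding."""

    def __init__(self):
        self._rows = {}  # (namespace tuple, key) -> (text, embedding)

    def put(self, namespace, key, text, embedding):
        # Writing the same namespace and key again overwrites the old memory.
        self._rows[(tuple(namespace), key)] = (text, list(embedding))

    def get(self, namespace, key):
        row = self._rows.get((tuple(namespace), key))
        return row[0] if row else None

    def search(self, namespace, query_embedding, top_k=1):
        # Rank only the memories inside the requested namespace.
        ns = tuple(namespace)
        scored = [
            (cosine(query_embedding, emb), key, text)
            for (row_ns, key), (text, emb) in self._rows.items()
            if row_ns == ns
        ]
        scored.sort(key=lambda item: item[0], reverse=True)
        return [(key, text) for _, key, text in scored[:top_k]]
```

A namespace such as `("users", "alice")` scopes both exact lookups and searches to one user, so `search` can never surface another user's preferences. For instance, after `put(("users", "alice"), "report_format", "Prefers weekly reports as CSV", [1.0, 0.0, 0.0])`, a query vector close to `[1.0, 0.0, 0.0]` returns that memory first.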

Conclusion

If your agent can’t remember, every interaction becomes a “first chat”. Users repeat context, preferences get lost, and momentum resets. Memory is what turns a one-off demo into an experience that improves over time.

With Databricks Lakebase providing a scalable, low-latency persistence layer, teams can implement both short- and long-term memory patterns that hold up under real production demands.

Aimpoint’s AgentOps Accelerator brings this to production by deploying custom agents that are natively memory-enabled, with Lakebase as the persistence backbone for both short- and long-term memory patterns. The result is quicker time-to-value with fewer operational gaps between a demo and a production-ready agent. Instead of stitching these pieces together from scratch, you can ship agents faster, reduce operational overhead, and focus on delivering trusted, user-ready outcomes.

Without memory, every interaction is a “first date”. With memory, you build continuity.

Partner with Aimpoint’s team of AI experts

Aimpoint Digital is ready to help you build your own memory-enabled agents on Databricks. Contact us to learn more about our services and how we can assist you in leveraging the power of Databricks Lakebase for your AI applications.
